Work Term 1 - UofG Athletics

About the Position

I was hired as an AODA and Website Analyst for the University of Guelph’s Athletic Department. The AODA, or Accessibility for Ontarians with Disabilities Act, is a 2005 statute that outlines the responsibilities for providing barrier-free accessibility for persons with disabilities who are studying, visiting, and working at the University. Aside from the considerations that may first come to mind, such as ramps and employment policies, this legislation also outlines responsibility for web accessibility. Compliance is mainly measured against the Web Content Accessibility Guidelines (WCAG) 2.0. It was my job to audit and remediate the Athletic Department’s websites against this standard and to work with the department’s content creators to develop a strategy for providing an accessible web presence for all members of the community.

In addition to my accessibility remediation duties, some interesting problems came up during my work term that gave me opportunities to apply my technical skillset in new ways. These projects included figuring out how to upload 150k client images into a database that didn’t provide any mechanism for batch importing, creating digital signage using scheduling data from a Flash-based web application, and using Selenium and SikuliX to programmatically fix the HTML markup of over 4500 news articles which were only accessible through an online WYSIWYG editor.

AODA Analyst

Web Accessibility Conference

Before my work term, I admittedly had never given much thought to web accessibility. In a user interface design class, we were introduced to accessibility as a principle of design, one consideration among hundreds for improving user experience. Web accessibility means something different in this context, however, and has a much more profound impact on how many people interact with the web.

One of the highlights of my work term was the opportunity to attend the third annual University of Guelph Accessibility Conference, which over two days filled Rozanski Hall with back-to-back presentations from North America’s leaders and experts in accessibility. The conference was really eye-opening and helped provide perspective for the work that I was undertaking.

Gryphons.ca and Friends

I had the opportunity to join the department at a very exciting time. For one, the department’s main website, gryphons.ca, was getting a major overhaul. The varsity sports page, which was home to around 5000 sports stories and all the university’s varsity pages, was getting a complete redesign. Secondly, the school was finally getting ready to unveil its new 170,000-square-foot athletic center and was releasing a whole new website for its fitness and recreation services.

The University’s Computing and Communications Services (CCS) department has done a great job of getting most of the University’s department sites hosted on a common Drupal-based framework. Aside from looking great, being open source, and providing a consistent user experience, it is fully WCAG 2.0 Level AA compliant, which is above and beyond the current AODA requirement.

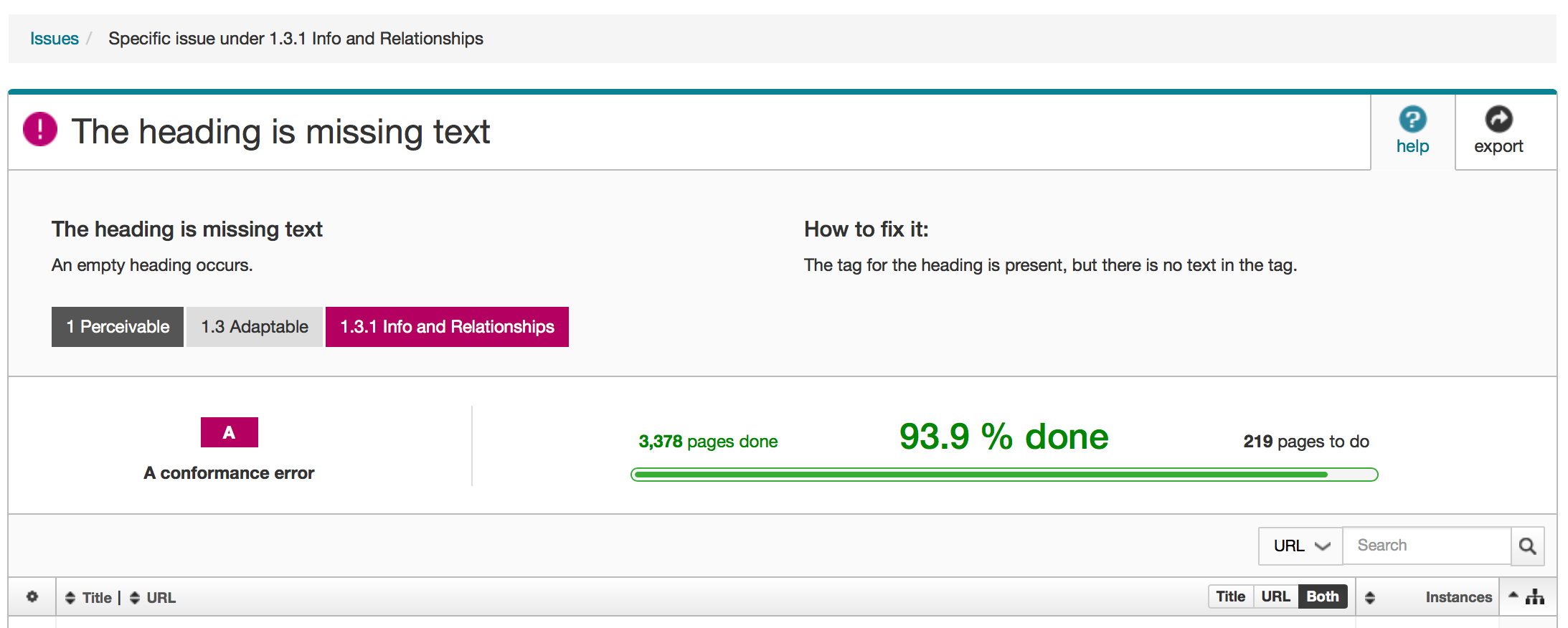

The Athletic department had to look elsewhere to support functionality like its online store, ticket sales, and real-time rosters, however. All in all, there were about half a dozen separate, publicly facing sites that are individually managed and account for around 50 thousand pages in total. It wouldn’t be practical to sit around looking at the markup of each page one at a time, so luckily I had diagnostic tools like SiteImprove, which helped make auditing them more manageable. SiteImprove is a web governance tool which can be used to crawl an entire site and perform automated WCAG 2.0 compliance validation.
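To give a flavour of what an automated check looks like, here is a minimal Python sketch of one rule that can be tested mechanically: images with no `alt` attribute. This is only an illustration using the standard library; it is not how SiteImprove works internally, and the class and function names are made up for the example.

```python
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Collects <img> tags that have no alt attribute at all."""

    def __init__(self):
        super().__init__()
        self.missing = []  # src values of images lacking alt

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        if "alt" not in attr_map:
            self.missing.append(attr_map.get("src", "(no src)"))


def find_images_missing_alt(html):
    """Return the src of every <img> in the markup that lacks an alt attribute."""
    checker = MissingAltChecker()
    checker.feed(html)
    return checker.missing


sample = '<p><img src="a.png" alt="Team photo"><img src="b.png"></p>'
print(find_images_missing_alt(sample))  # ['b.png']
```

A check like this can only tell whether an `alt` attribute exists, not whether the text in it is actually meaningful.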

As great as SiteImprove is, many of the WCAG 2.0 guidelines are subjective and can’t be assessed using an automated tool alone. Looking back, finding the issues was the easy part. The real work began with trying to make sense of them, finding their respective causes, and creating an actionable plan to address them.

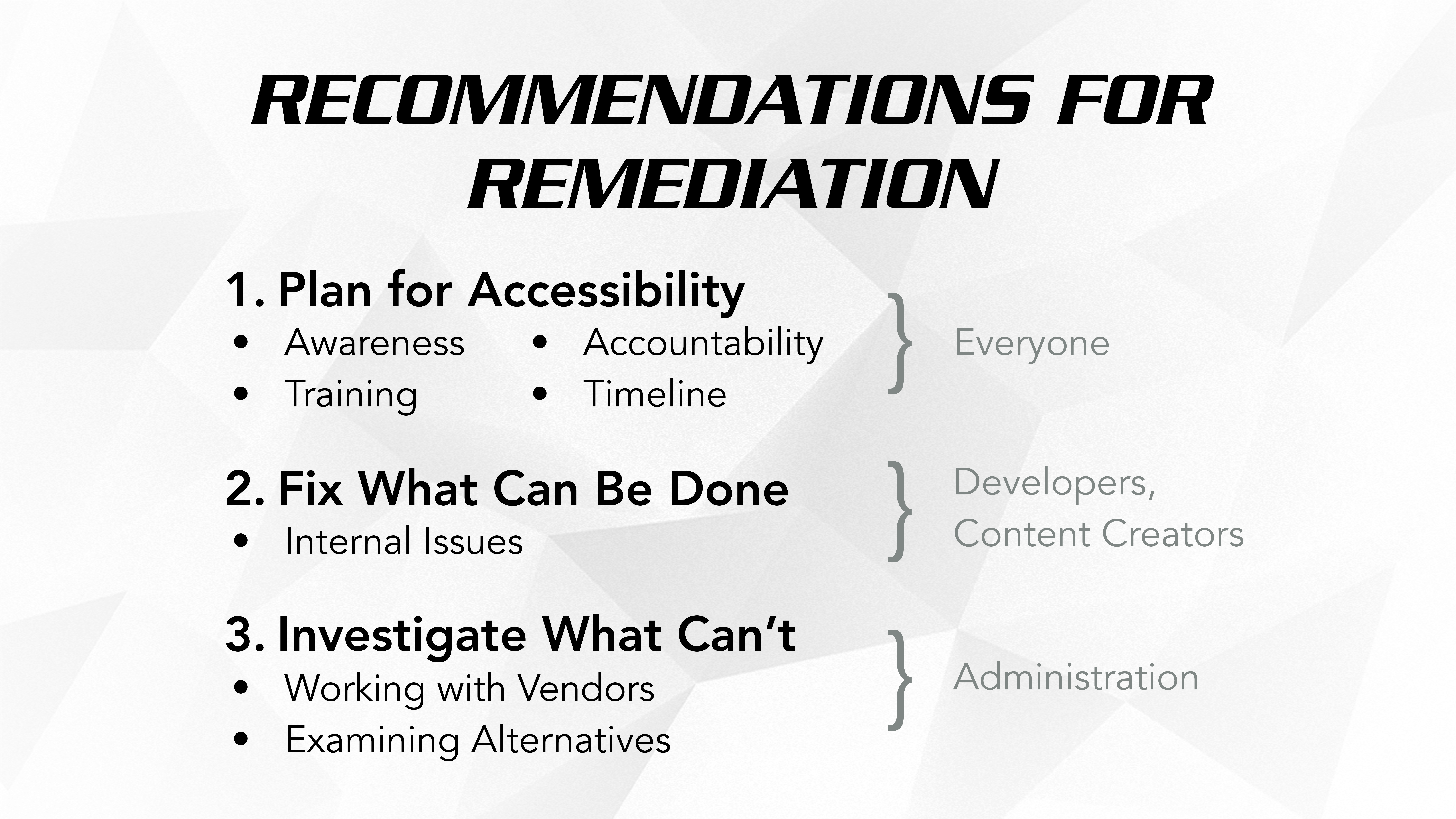

My first two weeks were spent mostly cataloging, categorizing, and prioritizing these issues. The result was a detailed 30-page report of 10 thousand or so words, which I then condensed into a 10-minute presentation for the marketing and communications team. I also created a Kanban board using Jira, and a training module called CLASH which went over best practices for the department. Below is a slide from my presentation where I go over my action plan.

Web Development

Digital Signage

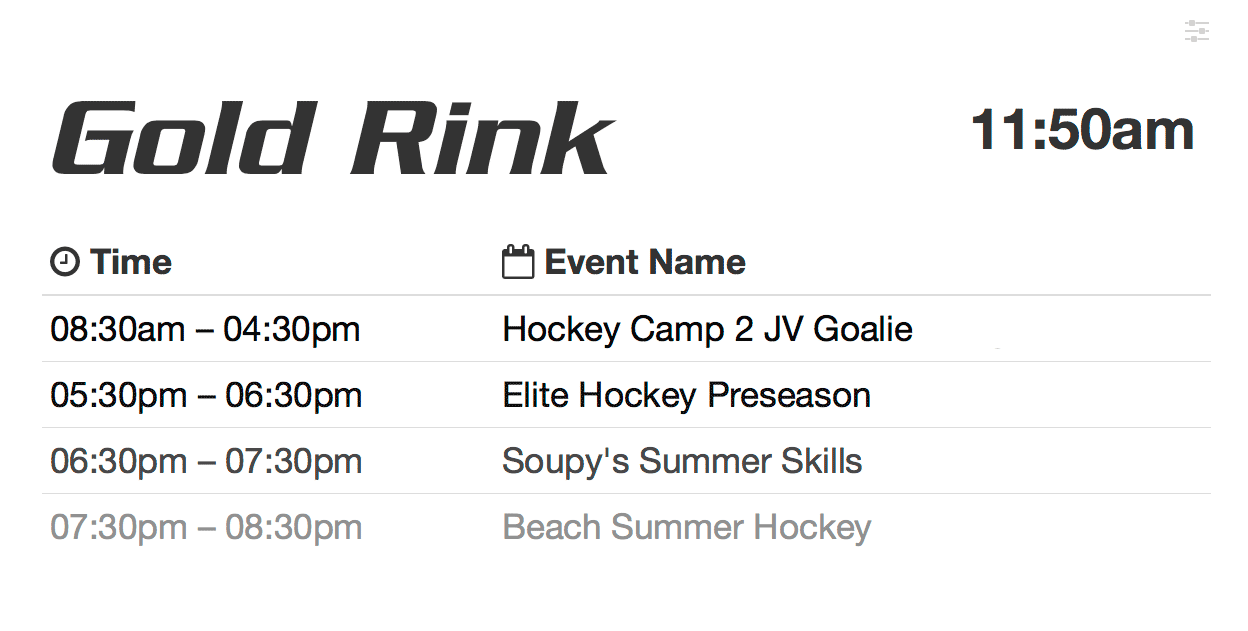

One of my favorite projects that I had a chance to work on was gryph-display-panel (GDP). It started as a test in scraping data from ActiveNet, the web service Athletics uses for scheduling, but ended up turning into a fully featured digital signage solution which will be deployed throughout the new athletic center using Raspberry Pis and Dell touchscreen displays.

The project gave me exposure to PHP and Apache on the back end, and a chance to use other tools, languages, and frameworks like JavaScript, Bootstrap, SASS, Gulp, and Bower on the front end. gryph-display-panel is open source and hosted on GitHub.

Nearing the end of my work term, I was also recruited to work on a separate digital signage project meant to display media clips, weather, and facility scheduling for other areas of the building. The department hired Yohan, a motion graphics artist, to create video content which would loop through the display. We used a service called TightRope Carousel to manage the media and BrightSign media players to play it. I created a quick little YouTube video tutorial demoing the setup of the system. A similar setup is currently being used for static images in some of the Engineering and Business buildings and will soon include the University Centre as well. The hope is to get the other University departments on board so that media assets can be shared between them. For instance, an advertisement for an upcoming concert in the UC could be shown in Athletics, a football game could be advertised in the UC, or an emergency weather alert could be displayed campus-wide.

Uploading User Images

What seemed like a trivial issue turned into something much more involved. Starting off, there was the task of procuring the images themselves. Every student and staff member must provide a photograph in order to get their ID card. These images are managed by the physical resource department, who enter them into a relational database as blob data. What I suspect amounted to only typing a few SELECT statements turned into a grueling 4-month gauntlet of interdepartmental bureaucracy and politics. Anyway, about two weeks before the end of my work term I had access to a dump of about 150 thousand student, staff, and other miscellaneous images named with their respective ID numbers.

After obtaining and organizing the images, I still had to figure out a way to upload them. The problem was that the front desk operations software that the images would need to be uploaded into had no means of doing any sort of batch image importing: you had to log into their admin site and upload them one at a time through a Windows-only Java applet in the browser. This process took around 2-3 minutes per image.
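To put that rate in perspective, here is a rough back-of-the-envelope estimate in Python. The 2.5 minutes per image is simply the midpoint of the range above, and the 40-hour week and 52 working weeks per year are assumptions made for the estimate.

```python
def manual_upload_years(images, minutes_per_image, hours_per_week=40, weeks_per_year=52):
    """Estimate how many working years a one-at-a-time upload would take."""
    total_hours = images * minutes_per_image / 60
    return total_hours / hours_per_week / weeks_per_year


# 150,000 images at roughly 2.5 minutes each (midpoint of 2-3 minutes)
print(round(manual_upload_years(150_000, 2.5), 1))  # 3.0
```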

I decided to see if I could somehow automate this process. In my first attempt I used Selenium and SikuliX, which uses image recognition, to follow the steps and prompts just like a human would. This worked well enough running over the weekend but was still too slow. On my second attempt I went back to the Java applet and decided to see if I could reproduce the uploading process directly by decompiling the applet and adding the needed functionality. A Java applet is just a JAR file, which is itself a zipped directory of Java classes. Java class files are compiled into bytecode for the JVM rather than into native machine code, as is the case with compiled languages like C. This process preserves metadata such as class names, method names, and field types, allowing the source code to be reverse engineered. Comments and variable names are lost during this process, however, so it can be somewhat tedious.
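Since a JAR is an ordinary zip archive, any zip tool can open it. The Python sketch below lists the `.class` entries of a JAR using the standard `zipfile` module. The archive here is a tiny stand-in built in memory, and the `com/example/...` names are hypothetical; they are not the contents of the real applet.

```python
import io
import zipfile


def list_class_files(jar_bytes):
    """Return the sorted names of .class entries in a JAR given as bytes."""
    with zipfile.ZipFile(io.BytesIO(jar_bytes)) as jar:
        return sorted(name for name in jar.namelist() if name.endswith(".class"))


# Build a small stand-in archive in memory (hypothetical entry names).
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as jar:
    jar.writestr("META-INF/MANIFEST.MF", "Manifest-Version: 1.0\n")
    jar.writestr("com/example/Uploader.class", b"\xca\xfe\xba\xbe")
    jar.writestr("com/example/Session.class", b"\xca\xfe\xba\xbe")

print(list_class_files(buffer.getvalue()))
# ['com/example/Session.class', 'com/example/Uploader.class']
```

The class files found this way are what a decompiler would then turn back into readable Java source.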

After tracing through the code a few times, I was able to isolate the mechanism that facilitated image uploading. In order to reproduce this process, I just needed to generate session keys, which are stored as cookies after a successful login, and pass them as arguments. Using Apache’s HttpComponents I was able to reproduce the POST requests required to log in and capture these keys. I adapted ActiveNet’s own code into a Runnable class which allowed me to upload multiple images concurrently on multiple threads, all the while logging the results into a MySQL database which could be queried to see whose photo had been uploaded and to skip reuploading images on subsequent runs. What would have taken a little over 3 years manually, working a 40-hour week, took about half an hour to complete. Now, chances are that if you’ve had a university ID at some point, your face will pop up the next time you swipe in at the athletic desk. active-photo-sync is open source and hosted on GitHub.
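The general pattern (upload in parallel, log each result, skip what is already done) can be sketched in a few lines of Python. This is not the active-photo-sync code, which is written in Java: here `fake_upload` is a hypothetical stand-in for the real HTTP request, and an in-memory SQLite database stands in for MySQL so the example is self-contained.

```python
import sqlite3
from concurrent.futures import ThreadPoolExecutor


def fake_upload(image_id):
    """Hypothetical stand-in for the real HTTP upload; always succeeds."""
    return True


def sync_images(conn, image_ids, upload=fake_upload, workers=4):
    """Upload images not yet logged as done; return the IDs uploaded this run."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS uploads (image_id TEXT PRIMARY KEY, ok INTEGER)"
    )
    done = {row[0] for row in conn.execute("SELECT image_id FROM uploads WHERE ok = 1")}
    pending = [i for i in image_ids if i not in done]

    # Run the uploads concurrently; map() returns results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(upload, pending))

    # Database writes stay on the calling thread to keep the sketch simple.
    conn.executemany(
        "INSERT OR REPLACE INTO uploads (image_id, ok) VALUES (?, ?)",
        [(i, int(ok)) for i, ok in zip(pending, results)],
    )
    conn.commit()
    return [i for i, ok in zip(pending, results) if ok]


conn = sqlite3.connect(":memory:")
first = sync_images(conn, ["1001", "1002", "1003"])
second = sync_images(conn, ["1001", "1002", "1003", "1004"])
print(first)   # ['1001', '1002', '1003']
print(second)  # ['1004']
```

The second run only touches the new ID, which is the behaviour that makes it safe to stop and restart a long batch job.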

Automating Accessibility

Although I conceded that it wouldn’t be practical to manually edit the source code of 50k web pages, be it for time restrictions or for the sake of my sanity, I decided to see what I could fix programmatically using what I’d learned about Selenium and SikuliX. While reviewing pages I noticed certain recurring bad practices, such as using deprecated tags like <font> and <bold>, empty or out-of-order heading tags, using images with embedded text in lieu of just using text, etc. After making a list of the worst offenders, I wrote a tool in Python to iterate through the pages and fix them. Code fixing code: meta. I won’t throw up the source code for this one since it was quick and dirty, but it demonstrably worked well.
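As an illustration of the kind of fix involved (not the original tool, which was never published), here is a minimal Python sketch that strips `<font>` tags while keeping their text, and removes empty headings. It uses regular expressions for brevity, which is fine for simple, predictable markup but would not be robust against arbitrary HTML; the function and pattern names are made up for the example.

```python
import re

# Opening or closing <font> tags, with any attributes.
FONT_TAG = re.compile(r"</?font\b[^>]*>", re.IGNORECASE)
# Headings h1-h6 that contain nothing but whitespace.
EMPTY_HEADING = re.compile(r"<h([1-6])\b[^>]*>\s*</h\1>", re.IGNORECASE)


def clean_markup(html):
    """Unwrap <font> tags and drop empty headings from an HTML fragment."""
    html = FONT_TAG.sub("", html)       # keep the text, drop the tag
    html = EMPTY_HEADING.sub("", html)  # remove headings with no content
    return html


sample = '<h2></h2><p><font color="red">Gryphons win</font> again</p>'
print(clean_markup(sample))  # <p>Gryphons win again</p>
```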

Acknowledgments

I’d like to thank Kevin, Bill, Jen, Leslie, Andrew, Yohan, and everyone else for making Athletics a welcoming environment to work in, and Student Affairs for providing the funding which made this position possible.

I’d also like to give a shout out to my supervisor Sean, who was a great boss and an overall great guy to share a cubicle with. Despite being a pretty busy guy with everything going on with the new building, having a newborn, and generally being a one-man IT department, he was always approachable when I needed help or advice and was genuinely invested in making my coop experience a meaningful one.

Lastly, I’d like to give an especially heartfelt thank you to my coop coordinator Laura Gato and to Sean for going to bat for me when I found out that Kaleidescape was closing down a week before I was slated to start my next work term. I only had about two weeks to find a job or I was out of coop, and by that point every relevant posting had been filled months ago. Even if I wanted to enroll in classes, everything was booked. On top of that, I had just bought a car to commute to Waterloo for the position and I figured in a week’s time I’d probably be sleeping in it down by the river. They left no stone unturned and within 4 days I had secured a job at TransPlus, where I’m happily working now!